Why Computer Networks?

Understanding the Importance of Computer Networks

A while ago, I overheard a student lamenting that they did not understand why the university required them to study a computer networks course (computer networks is a core course for undergraduate computer science majors at many institutions). The student saw no immediate value in taking the course and was confused about its potential benefits. One might ask why the course instructors had not motivated the subject for the student. Instructors are often so pressed for time to cover the material that they do not dedicate sufficient time to motivating the subject. Many instructors teach this subject every semester and may overlook that what is evident to them might not be evident to students encountering the subject for the first time. This post is my effort to explain why computer networks is an important subject area.

From Mars missions to streaming your favourite show, computer networks are the foundational problem-solvers that power our interconnected digital lives.

If you are not in the mood to read today, the following podcast is for you to listen to and enjoy. We created it with Google’s NotebookLM, using our text as input.

What are Computer Networks?

A computer network is a collection of hosts connected in a deliberate way so that the computational devices on the network can communicate with each other efficiently for many purposes (both leisure and business). The global Internet is an example of a ubiquitous network upon which our modern economy relies. The subject of computer networks is concerned with the architectural and operational challenges of such networks, and with their solutions. Below, we discuss some key reasons why computer networks is a foundational subject area.

Problem-solving Through the Lens of Computer Networks

The subject of computer networks has a rich history spanning many decades. Over that time, competing solutions were devised for many challenges, and some of them have stood the test of time. If you approach the subject of computer networks as a means to understand specific problems and their solutions, our hope is that when you encounter similar challenges elsewhere in your life (probably at work), you will have a good starting point towards a solution instead of starting from scratch. The computer networks field is full of fascinating solutions to some rather complex problems. We list a few of them below to support our point.

There are many situations where the thundering-herd phenomenon can overwhelm a resource (for example, a server). The thundering herd is a problem in which many requests arrive at a service in a burst, spiking its load and potentially causing issues such as increased latency for customers, or server crashes due to resource exhaustion or thrashing. De-synchronising the incoming requests is one solution to this problem. The exponential back-off algorithm originates from the early days of Ethernet, when hosts shared a single wire. If more than one host transmitted data at the same time on the shared wire, their transmissions would collide, mingling both signals and making them unintelligible. If the hosts simply retried at once, they would likely collide again, leaving the medium unable to do any useful work. Exponential back-off is a simple yet elegant way to break this synchrony: after each collision, a host waits a random delay chosen from a window that doubles in size, so the colliding hosts quickly spread out their retries. The algorithm is applicable to many other use cases, such as clients retrying requests to an overloaded server.
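To make the idea concrete, here is a minimal Python sketch of binary exponential back-off, assuming the classic Ethernet parameters: after the n-th collision a host waits a random number of slot times drawn uniformly from 0 to 2^n - 1, the window stops doubling after 10 collisions, and the host gives up after 16 attempts. The function names are our own, and the simulation is a simplified toy model in which hosts that tie for the earliest slot simply collide again.

```python
import random

MAX_EXPONENT = 10  # classic Ethernet stops doubling the window after 10 collisions
MAX_ATTEMPTS = 16  # ...and gives up on the frame after 16 attempts


def backoff_slots(collisions: int, rng: random.Random) -> int:
    """Return how many slot times to wait after the given number of collisions."""
    if not 1 <= collisions <= MAX_ATTEMPTS:
        raise ValueError("collisions must be between 1 and 16")
    window = 2 ** min(collisions, MAX_EXPONENT)  # 2, 4, 8, ... capped at 1024 slots
    return rng.randrange(window)                 # uniform choice in [0, window - 1]


def collisions_until_success(num_hosts: int, rng: random.Random) -> int:
    """Toy model: colliding hosts keep backing off until exactly one of them
    picks the earliest slot and can transmit alone. Returns the collision count."""
    for collisions in range(1, MAX_ATTEMPTS + 1):
        picks = [backoff_slots(collisions, rng) for _ in range(num_hosts)]
        if picks.count(min(picks)) == 1:  # a single host goes first: synchrony broken
            return collisions
    raise RuntimeError("gave up after 16 attempts")


rng = random.Random(7)
trials = [collisions_until_success(2, rng) for _ in range(10_000)]
print("average collisions for 2 hosts:", sum(trials) / len(trials))
```

For two hosts, the chance of picking the same slot halves with every doubling of the window, so the average number of collisions stays well below two.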

TCP (Transmission Control Protocol) is a core networking protocol, and one of its jobs is to avoid overcrowding the network with data and to recover from congestion if it occurs. TCP’s congestion avoidance and control algorithms use rather rudimentary signals, such as packet loss, to detect the onset of congestion and dynamically estimate a good sending rate. This lets a connection use the available end-to-end bandwidth efficiently, share bandwidth fairly with the other flows crossing its path, and avoid congesting the network. From TCP we can learn how a complex control system can be built from simple input signals. The real world is messy, and we often have to make important decisions with partial or incomplete information. In our view, TCP is an excellent case study of how such a solution might function.
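At the heart of classic (Reno-style) TCP congestion avoidance is additive increase, multiplicative decrease (AIMD): grow the congestion window by one segment per round trip, and halve it when a loss signals congestion. The Python sketch below is a simplification, assuming a single shared bottleneck whose hypothetical `capacity` (in segments per round trip) triggers a loss for every flow whenever their combined windows exceed it; real TCP also has slow start, timeouts, and other details omitted here.

```python
def aimd(windows: list[float], capacity: float, rounds: int) -> list[list[float]]:
    """Simulate flows sharing one bottleneck; return every flow's window per round trip."""
    history = []
    current = list(windows)
    for _ in range(rounds):
        history.append(list(current))
        if sum(current) > capacity:
            # Loss detected (hypothetical signal): every flow halves its window.
            current = [max(1.0, w / 2) for w in current]
        else:
            # No loss: every flow probes for more bandwidth, one segment per RTT.
            current = [w + 1.0 for w in current]
    return history


# A single flow traces the familiar "sawtooth".
print([w[0] for w in aimd([1.0], capacity=5.0, rounds=10)])

# Two flows that start far apart drift towards an equal share.
print(aimd([1.0, 20.0], capacity=30.0, rounds=200)[-1])
```

The first line prints `[1.0, 2.0, 3.0, 4.0, 5.0, 6.0, 3.0, 4.0, 5.0, 6.0]`. In the two-flow case, additive increase leaves the gap between the windows unchanged while each halving cuts it in half, which is why AIMD drifts towards a fair share.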

Consistent hashing is an algorithm that helps distribute client load across a fleet of servers whose size changes over time. It ensures that only a small fraction of request-to-server mappings change when a new server joins or an existing server leaves the fleet. Many network devices, such as load balancers, use this algorithm, and once again it is widely applicable elsewhere.
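A minimal consistent-hashing ring in Python, for illustration only. It assumes MD5 as the hash function and 100 "virtual nodes" per server to spread load more evenly; both are arbitrary choices for this sketch, and `HashRing` and the server names are hypothetical. The demo compares it with naive modulo placement when a fifth server joins.

```python
import bisect
import hashlib


def ring_hash(key: str) -> int:
    """Map a string to a position on the ring (first 8 bytes of its MD5 digest)."""
    return int.from_bytes(hashlib.md5(key.encode()).digest()[:8], "big")


class HashRing:
    def __init__(self, servers: list[str], vnodes: int = 100):
        self.vnodes = vnodes
        self._ring: list[tuple[int, str]] = []  # sorted (position, server) pairs
        for server in servers:
            self.add(server)

    def add(self, server: str) -> None:
        # Each server owns several points ("virtual nodes") on the ring.
        for i in range(self.vnodes):
            bisect.insort(self._ring, (ring_hash(f"{server}#{i}"), server))

    def remove(self, server: str) -> None:
        self._ring = [(pos, s) for pos, s in self._ring if s != server]

    def lookup(self, key: str) -> str:
        """Return the server owning the first ring point clockwise from the key."""
        if not self._ring:
            raise LookupError("no servers in the ring")
        i = bisect.bisect_left(self._ring, (ring_hash(key),))
        return self._ring[i % len(self._ring)][1]  # wrap around past the last point


keys = [f"client-{n}" for n in range(10_000)]
ring = HashRing(["s1", "s2", "s3", "s4"])
before = {k: ring.lookup(k) for k in keys}
ring.add("s5")
moved = [k for k in keys if ring.lookup(k) != before[k]]
print(f"consistent hashing: {len(moved) / len(keys):.0%} of keys moved")

# Naive modulo placement: going from 4 to 5 servers reshuffles most keys.
naive_moved = sum(ring_hash(k) % 4 != ring_hash(k) % 5 for k in keys)
print(f"modulo hashing:     {naive_moved / len(keys):.0%} of keys moved")
```

With consistent hashing, roughly one key in five should move, and every moved key lands on the new server; with modulo placement, roughly four in five move.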

Packet-switched computer networks can become congested because resources are not reserved in advance (as they are in circuit switching). Queuing theory is an elegant analytical tool for making sense of such situations in terms of expected packet delays and queue sizes. It, too, is applicable in many practical, real-world use cases.
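As a taste of what queuing theory offers, the simplest textbook model is the M/M/1 queue: packets arrive as a Poisson process at rate λ, a single link serves them with exponentially distributed service times at rate μ, and the buffer is unbounded. Under those idealised assumptions, the mean time a packet spends in the system is 1/(μ - λ). The Python sketch below evaluates that formula and cross-checks it with a small simulation based on Lindley's recursion for waiting times. The function names are our own.

```python
import random


def mm1_mean_delay(arrival_rate: float, service_rate: float) -> float:
    """Mean time in system (queueing + service) for an M/M/1 queue: 1 / (mu - lambda)."""
    if arrival_rate >= service_rate:
        raise ValueError("unstable: the queue grows without bound when lambda >= mu")
    return 1.0 / (service_rate - arrival_rate)


def simulate_mm1_delay(arrival_rate: float, service_rate: float,
                       packets: int, seed: int = 1) -> float:
    """Estimate the same quantity by simulating packets one at a time."""
    rng = random.Random(seed)
    wait = 0.0   # queueing delay of the current packet (the first finds an empty queue)
    total = 0.0
    for _ in range(packets):
        service = rng.expovariate(service_rate)
        total += wait + service  # time in system = waiting + service
        # Lindley's recursion: the next packet's wait, given the gap until it arrives.
        gap = rng.expovariate(arrival_rate)
        wait = max(0.0, wait + service - gap)
    return total / packets


# Mean delay in units of the mean service time as the link gets busier.
for load in (0.5, 0.8, 0.9, 0.99):
    print(f"load {load:.2f}: mean delay {mm1_mean_delay(load, 1.0):.1f}")

print("simulated at load 0.5:", round(simulate_mm1_delay(0.5, 1.0, 200_000), 2))
```

At loads of 0.5, 0.8, 0.9, and 0.99, the formula gives mean delays of 2, 5, 10, and 100 service times, and the simulated value at load 0.5 should land close to 2. Delay rises steeply as a link approaches full utilisation.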

The global Internet and the French Minitel (now retired) are two examples of computer networks. The former survived and is thriving, while the latter was shut down in 2012. Studying computer networks through the global Internet and its architecture answers many questions about how to architect a complex system when future needs (for both applications and underlying technology) were not precisely known at design time. For system designers, it is a great case study.

The above list is just a sample and is not exhaustive. We hope it is clear that by studying computer networks, we observe some of the finest algorithms in action. Our aim is to equip students with new tools they can apply to similar problems instead of reinventing the wheel.

Using the Global Internet as a Case Study

The global Internet is one of the largest human artefacts, spanning the entire globe. It has an hourglass-shaped architecture in which the narrow middle, the IP protocol, connects the upper (application-side) and lower (hardware-side) halves of the network stack. Designing such a system is complex and relies heavily on abstraction to manage that complexity. Network layering, which modularises the different parts of the network stack, helps designers keep layer-specific complexity contained within a layer while exposing a well-defined interface to neighbouring layers.
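A toy Python sketch of layering and encapsulation: each layer adds its own header on the way down the stack and strips it on the way up, and it talks only to the layers directly above and below it. The layer names and bracketed text headers are hypothetical placeholders, not real packet formats.

```python
from __future__ import annotations


class Layer:
    """One layer of a toy protocol stack; it knows only its own header and neighbours."""

    def __init__(self, name: str, below: Layer | None = None):
        self.header = f"[{name}]".encode()
        self.below = below
        self.above: Layer | None = None
        self.delivered: bytes | None = None  # set on the top layer when data arrives
        if below is not None:
            below.above = self

    def send(self, data: bytes) -> bytes:
        """Prepend this layer's header and hand the result to the layer below."""
        unit = self.header + data
        return self.below.send(unit) if self.below is not None else unit  # bottom: "the wire"

    def receive(self, unit: bytes) -> None:
        """Strip this layer's header and hand the rest to the layer above."""
        if not unit.startswith(self.header):
            raise ValueError(f"unexpected header at {self.header.decode()}")
        payload = unit[len(self.header):]
        if self.above is not None:
            self.above.receive(payload)
        else:
            self.delivered = payload


def build_stack() -> tuple[Layer, Layer]:
    """Return (top, bottom) of a four-layer stack with IP as the narrow waist."""
    link = Layer("LINK")
    ip = Layer("IP", below=link)
    transport = Layer("TCP", below=ip)
    app = Layer("APP", below=transport)
    return app, link


sender_top, _ = build_stack()
receiver_top, receiver_bottom = build_stack()
wire = sender_top.send(b"hello")
print(wire)                    # b'[LINK][IP][TCP][APP]hello'
receiver_bottom.receive(wire)
print(receiver_top.delivered)  # b'hello'
```

Because each layer only adds or checks its own header, one layer could be swapped out (say, a different link layer) without touching the others. That is the containment of complexity that layering aims for.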

Providing low latency to clients is often a requirement, but if a service is far away, the propagation delay (which grows with distance) increases, even though signals travel at a large fraction of the speed of light. How do we deal with such physical constraints? How do major services like Netflix, YouTube, and Zoom satisfy their customers’ needs? The subject of computer networks discusses all this in detail.
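A back-of-the-envelope Python sketch of why distance matters: fetching a small object costs at least one round trip of propagation delay plus the time to push its bits onto the link. It assumes signals travel through optical fibre at roughly 2 × 10^8 m/s (about two-thirds of the speed of light) and ignores connection set-up, queuing, and processing delays. The distances, object size, and link speeds are illustrative values.

```python
FIBRE_SPEED_M_PER_S = 2e8  # approximate signal speed in optical fibre


def fetch_time_ms(distance_km: float, size_bytes: int, bandwidth_bps: float) -> float:
    """Lower bound on fetching one object: round-trip propagation + transmission time."""
    rtt_s = 2 * distance_km * 1_000 / FIBRE_SPEED_M_PER_S
    transmission_s = size_bytes * 8 / bandwidth_bps
    return (rtt_s + transmission_s) * 1_000


size = 10_000  # a 10 KB object
for distance in (100, 10_000):      # a nearby server vs one across the globe
    for bandwidth in (100e6, 1e9):  # 100 Mbps vs 1 Gbps
        t = fetch_time_ms(distance, size, bandwidth)
        print(f"{distance:>6} km at {bandwidth / 1e6:>5.0f} Mbps: {t:6.2f} ms")
```

Here ten times more bandwidth saves well under a millisecond (1.80 ms vs 1.08 ms nearby, 100.80 ms vs 100.08 ms far away). Moving the server from 10,000 km to 100 km saves about 99 ms. For small transfers, distance dominates.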

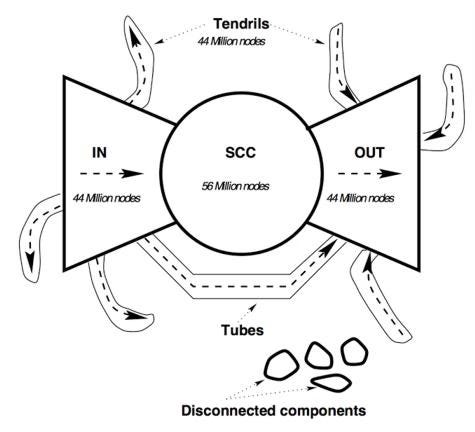

Complex systems like the Internet exhibit emergent behaviours, where the system as a whole behaves in ways that its individual parts do not. Understanding such behaviour has become a fascinating sub-discipline of the field, with prestigious networking conferences (for example, ACM’s Internet Measurement Conference, IMC) dedicated to collecting measurements and making sense of networked systems. This sub-field has kept generations of computer network researchers curious and busy.

Innovations in Data Centres and at the Edge

Most modern digital services, such as Google Search, Maps, and WhatsApp, serve billions of users and require special computational facilities known as data centres. A large data centre can house hundreds of thousands of servers to meet these computational needs. There has been a flurry of innovation inside data centres and in the networks that connect multiple data centres. Some of these innovations include:

Clos (pronounced KLO) networks, which provide redundant paths between a source and a destination.

Software-Defined Networking (SDN) for efficient management and high utilisation of the network.

Domain-specific fleets of hardware (such as TPUs and GPUs) for AI/ML workloads.

Special variants of the TCP protocol suited to data centre traffic.

Many hyperscalers (such as Google, Facebook, and AWS) have deployed their own long-haul optical-fibre links to connect their globally dispersed data centres. This approach differs considerably from how remote sites were connected in the past, when a handful of top-tier ISPs connected everyone else to the Internet core. This new style of networking gives hyperscalers an enormous edge in the speed of innovation and in satisfying customer needs.

Many tasks traditionally performed by the main CPU can now be delegated to the NIC (Network Interface Card).

Inside a data centre, a running virtual machine can be moved to a different physical server without breaking any client connections.

At the edge of the network (close to the end customers), the number of hosts has exploded over the years, and IoT devices (such as IP cameras and smart air conditioners) keep adding to this growth. Serving these clients efficiently requires care: for example, how do we ensure that time-sensitive data from an IoT device reaches a service in a timely manner?

Role of Networking inside the Processor and Domain-Specific Infrastructure

The number of cores inside a processor keeps increasing, and today it is hard to find a single-core processor. These cores need to communicate with each other, with memory, and with I/O devices, and special on-chip networks are used for this purpose. While these networks have their own characteristics (for example, propagation delay inside a processor is often ignored because the distances are so short), many networking concepts apply there. Similarly, AI/ML requires a special, domain-specific fleet of hardware such as TPUs and GPUs. Most workloads are too large to fit on a single device, so many such devices are often used together. With the exponential surge in computational needs for ML training and inference, the networks that connect these devices have received special attention.

Security: A Cross-Cutting Concern

Hardly a day goes by without news of a security breach at some service, an attack on critical infrastructure such as national grids or water-treatment plants, or a denial-of-service or ransomware attack. Some examples are as follows:

A major data breach involving 16 billion leaked passwords from various tech giants and government portals was recently uncovered, according to cybersecurity experts. The leaked credentials include login information for Apple, Google, Telegram, and even government websites. According to Cybernews, the breach has been described as the largest password leak in history, and researchers suggest it may be part of an even larger data leak.

In June 2025, Cloudflare reported defending against a record-breaking 7.3 Tbps DDoS attack.

In 2024, a series of ransomware attacks significantly impacted US hospitals, causing widespread disruptions to patient care and financial losses. These attacks, often involving the encryption of electronic health records and other critical systems, forced hospitals to rely on manual processes, divert emergency services, and delay or reschedule procedures. The attacks highlighted the vulnerability of the healthcare sector to cyberattacks and the urgent need for enhanced cybersecurity measures.

Do you see the pattern above? Since most services are accessible over the Internet, attackers use the network to attack systems remotely. Understanding and dealing with such attacks requires a good grasp of computer networking concepts.

Defending Personal Liberties

Increasingly, authorities around the world are placing curbs on specific services (for example, in some countries, it is prohibited to use X (Twitter) or YouTube). Understanding how such curbs work technically, and what an individual can (or cannot) do about them, also requires computer networking knowledge.

Extending Human Reach Beyond This Planet!

I am sure you have seen some dazzling pictures sent by space telescopes. The ability to communicate with remote machines makes this possible.

NASA has sent multiple autonomous rovers to Mars on different scientific missions. The distance between Mars and Earth varies over time because of their orbits: Mars is roughly 34 million to 250 million miles away from Earth. How does NASA’s mission control on Earth communicate with the Mars rovers? As you might have guessed, via radio communication.

The one-way propagation delay from Earth to a Mars rover therefore varies from about 3 to 22 minutes. This also means that if humans someday live on Mars, they will not be able to talk to their families on Earth in real time, because the round-trip time is 6 to 44 minutes! This is my favourite example for bringing home the nature of propagation delay in networks.
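The delay figures above follow from simple arithmetic: divide the distance by the speed of light (about 186,282 miles per second in a vacuum). Here is a small Python sketch that ignores relay hops and processing delays along the way:

```python
SPEED_OF_LIGHT_MILES_PER_S = 186_282  # speed of light in a vacuum, approximately


def one_way_delay_minutes(distance_miles: float) -> float:
    """Propagation delay for a radio signal travelling the given distance."""
    return distance_miles / SPEED_OF_LIGHT_MILES_PER_S / 60


for distance in (34e6, 250e6):  # closest and farthest Earth-Mars distances in the text
    one_way = one_way_delay_minutes(distance)
    print(f"{distance / 1e6:.0f} million miles: one-way {one_way:.1f} min, "
          f"round trip {2 * one_way:.1f} min")
```

This prints about 3.0 and 22.4 minutes one way (6.1 and 44.7 minutes round trip), in line with the figures in the text.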

Scientific discoveries by the Hubble and James Webb telescopes are possible because those telescopes can communicate with their mission control on Earth, sending back amazing pictures and other data that extend our understanding of the universe!

Some Unusual Places where Networks Play a Role

Networks are used, in one way or another, in many places you might not expect. Some examples are as follows:

Fly-by-wire networked systems in modern jetliners

Many components in a modern car (for example, carrying the signal from the brake pedal to the brakes to reduce speed)

GPS signals and the many devices that use them

NFC (Near-Field Communication) for smartphone payments

Like conventional networks, such specialised networks are also susceptible to security threats. For example, during recent conflicts in the Middle East, commercial jetliners have had difficulty navigating because of GPS jamming and spoofing.

Many Use Cases When You Need Networking Deep Dives!

There are many situations in which you need sufficient knowledge of computer networking concepts and operations to solve problems. A few examples are:

You encounter slower Internet speeds at home. To understand what is going on, you need a reasonable amount of computer networking knowledge.

If your home has many users, and some are complaining that their applications are not working, what will you do to resolve this issue? You might need to tweak some of your home router’s configurations, but with no or limited knowledge, the sheer number of configuration possibilities might overwhelm you.

If you are building a website and its response time for clients is too high, how will you identify and fix the problem? The delays might be at the application level or inside the network. How will you tell which is the case? Again, you need computer networking knowledge.

To build networked applications (which most of today’s apps are), you need a sufficient grasp of networking concepts. Deeper knowledge will help you resolve issues faster and become a proficient software developer.

Leslie Lamport, a Turing Award-winning computer scientist, once said: “A distributed system is one in which the failure of a computer you didn't even know existed can render your own computer unusable.” We can extend that to networking as well: a cut in an optical-fibre cable somewhere could cause outages for you and your applications, or slow down your Internet connection. A few examples are:

A recent significant undersea cable cut was reported in the Baltic Sea, affecting cables connecting Lithuania with Sweden and Finland with Germany.

In March 2013, a fibre-optic cable was cut in the Arabian Sea near Karachi, resulting in a nearly 60 per cent decrease in Internet speed across Pakistan. Nearly 50 per cent of Pakistan’s Internet traffic was disrupted because SMW4, one of the four undersea cables that connect the country to the Internet, was damaged.

Understanding the nature of our networks helps you prepare for such events and react rationally instead of getting frustrated with your service provider about outages or slow network speeds.

I hope by now you are convinced that your time spent learning about computer networks is time well spent!
